Hilbert-Schmidt Independence Criterion Lasso (HSIC Lasso)

Introduction

The goal of supervised feature selection is to find a subset of input features that are responsible for predicting output values. The least absolute shrinkage and selection operator (Lasso) allows computationally efficient feature selection, but only based on linear dependency between input features and output values. In this project, we consider a feature-wise kernelized Lasso for capturing non-linear input-output dependency. We first show that, with particular choices of kernel functions, non-redundant features with strong statistical dependence on output values can be found in terms of kernel-based independence measures such as the Hilbert-Schmidt independence criterion (HSIC). We then show that the globally optimal solution can be efficiently computed, which makes the approach scalable to high-dimensional problems.

Main Idea

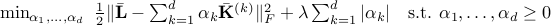

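The HSIC Lasso is formulated as the following optimization problem:

$$
\min_{\boldsymbol{\alpha}\in\mathbb{R}^{d}} \; \frac{1}{2}\left\|\bar{L}-\sum_{k=1}^{d}\alpha_k\bar{K}^{(k)}\right\|_{\mathrm{F}}^{2}+\lambda\|\boldsymbol{\alpha}\|_{1} \quad \text{s.t.} \quad \alpha_1,\ldots,\alpha_d \ge 0,
$$

where $\|\cdot\|_{\mathrm{F}}$ is the Frobenius norm, $\bar{K}^{(k)} = \Gamma K^{(k)} \Gamma$ is the centered Gram matrix computed from the $k$-th feature, $\bar{L} = \Gamma L \Gamma$ is the centered Gram matrix computed from the output $\mathbf{y}$, $\Gamma = I_n - \frac{1}{n}\mathbf{1}_n\mathbf{1}_n^{\top}$ is the centering matrix, $n$ is the number of samples, $d$ is the number of features, and $\lambda \ge 0$ is the regularization parameter.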
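Let $\bar{K}^{(k)}$ and $\bar{L}$ be the centered Gram matrices of the $k$-th feature and of the output. Both are symmetric, so $\langle A, B\rangle_{\mathrm{F}} = \operatorname{tr}(AB)$. Expanding the squared Frobenius norm then rewrites the objective in terms of a kernel-based independence measure. Write $\mathbf{u}_k$ for the vector of values of the $k$-th feature, $\mathbf{y}$ for the output vector, and $\mathrm{HSIC}(\mathbf{u}_k,\mathbf{y}) = \operatorname{tr}(\bar{K}^{(k)}\bar{L})$ for the (unnormalized) empirical HSIC. With this notation, the objective equals

$$
\frac{1}{2}\mathrm{HSIC}(\mathbf{y},\mathbf{y}) - \sum_{k=1}^{d}\alpha_k\,\mathrm{HSIC}(\mathbf{u}_k,\mathbf{y}) + \frac{1}{2}\sum_{k=1}^{d}\sum_{l=1}^{d}\alpha_k\alpha_l\,\mathrm{HSIC}(\mathbf{u}_k,\mathbf{u}_l) + \lambda\|\boldsymbol{\alpha}\|_{1}
$$

The first term does not depend on $\boldsymbol{\alpha}$. The second term favors features that are strongly dependent on the output. The third term penalizes giving weight to pairs of features that are dependent on each other. This is why the selected features tend to be both relevant and non-redundant.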

To compute the HSIC Lasso solution, we use the dual augmented Lagrangian (DAL) package.
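This software relies on the DAL package. The Python sketch below is for illustration only and uses the standard library alone. It assumes a Gaussian kernel for both the features and the output, with widths `sigma_x = sigma_y = 1`. It builds the centered Gram matrices and then solves the same problem: a Lasso on the vectorized matrices with nonnegative coefficients. The solver is cyclic coordinate descent, a swapped-in technique rather than DAL. The names `gaussian_gram`, `center`, `flatten` and `hsic_lasso` are hypothetical and are not part of this software.

```python
import math
import random


def gaussian_gram(values, sigma=1.0):
    """Gram matrix K[i][j] = exp(-(v_i - v_j)^2 / (2 * sigma^2)) of a 1-D sample."""
    return [[math.exp(-((a - b) ** 2) / (2.0 * sigma ** 2)) for b in values]
            for a in values]


def center(gram):
    """Double centering: returns Gamma K Gamma with Gamma = I - (1/n) 1 1^T."""
    n = len(gram)
    row_means = [sum(row) / n for row in gram]
    col_means = [sum(gram[i][j] for i in range(n)) / n for j in range(n)]
    grand_mean = sum(row_means) / n
    return [[gram[i][j] - row_means[i] - col_means[j] + grand_mean
             for j in range(n)] for i in range(n)]


def flatten(matrix):
    """vec(): stack the rows of a matrix into one list."""
    return [x for row in matrix for x in row]


def hsic_lasso(X, y, lam, sigma_x=1.0, sigma_y=1.0, n_iter=200):
    """Minimize 0.5 * ||L_bar - sum_k alpha_k K_bar_k||_F^2 + lam * sum_k alpha_k
    subject to alpha_k >= 0, by cyclic coordinate descent (hypothetical helper, not DAL).

    X: list of n samples, each a list of d feature values; y: list of n outputs.
    Returns the list of d nonnegative coefficients alpha.
    """
    d = len(X[0])
    target = flatten(center(gaussian_gram(y, sigma_y)))  # vec(L_bar)
    cols = [flatten(center(gaussian_gram([row[k] for row in X], sigma_x)))
            for k in range(d)]  # vec(K_bar_k) for each feature k
    sq_norms = [sum(v * v for v in col) for col in cols]
    alpha = [0.0] * d
    residual = list(target)  # target - sum_k alpha_k * cols[k]; all alpha_k start at 0
    for _ in range(n_iter):
        for k in range(d):
            if sq_norms[k] == 0.0:
                continue  # constant feature: its centered Gram matrix is all zeros
            # rho = <cols[k], target - sum_{j != k} alpha_j * cols[j]>
            rho = sum(c * r for c, r in zip(cols[k], residual)) + alpha[k] * sq_norms[k]
            # exact 1-D minimizer: one-sided soft threshold, clipped at 0
            new_alpha = max(0.0, (rho - lam) / sq_norms[k])
            delta = new_alpha - alpha[k]
            if delta != 0.0:
                residual = [r - delta * c for r, c in zip(residual, cols[k])]
                alpha[k] = new_alpha
    return alpha


if __name__ == "__main__":
    rng = random.Random(0)
    X = [[rng.uniform(-2.0, 2.0) for _ in range(3)] for _ in range(40)]
    y = [row[0] ** 2 for row in X]  # depends non-linearly on feature 0 only
    print(hsic_lasso(X, y, lam=1.0))
```

In the demo, the output is a non-linear, even function of feature 0, so a linear Lasso would see almost no correlation with it. HSIC Lasso should still assign that feature the largest coefficient. Each vectorized Gram matrix has n² entries, so this naive version is only meant for small n.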

Features

Can select features with non-linear dependence on the output.

Highly scalable with respect to the number of features.

Convex optimization, so the globally optimal solution can be computed.


Usage

Download the source code.

Run the demo script (demo_HSICLasso.m) in MATLAB.

Acknowledgement

I am grateful to Prof. Masashi Sugiyama and Dr. Leonid Sigal for their support in developing this software.

Contact

I welcome any kind of feedback. E-mail:

Reference

Yamada, M., Jitkrittum, W., Sigal, L., Xing, E. P. & Sugiyama, M.

High-Dimensional Feature Selection by Feature-Wise Non-Linear Lasso.

arXiv:1202.0515.