Communication and Human Science

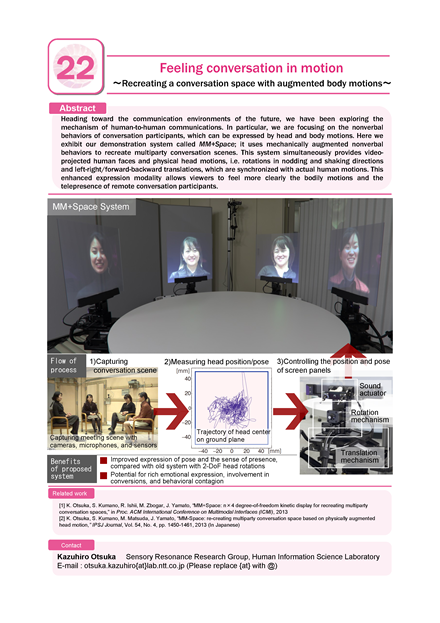

Feeling conversation in motion

- Recreating a conversation space with augmented body motions -

Abstract

Looking toward future communication environments, we have been exploring the mechanisms of human-to-human communication. In particular, we focus on the nonverbal behaviors of conversation participants, as expressed through head and body motions. Here we demonstrate MM+Space, a system that uses mechanically augmented nonverbal behaviors to recreate multiparty conversation scenes. The system presents video-projected human faces together with physical head motions, i.e., rotations in the nodding and shaking directions and left-right and forward-backward translations, synchronized with the actual motions of the participants. This enhanced expression modality lets viewers perceive more clearly the bodily motions and the telepresence of remote conversation participants.

Presenters

Kazuhiro Otsuka

Human Information Science Laboratory

Ryou Ishii

Human Information Science Laboratory

Junji Yamato

Media Information Laboratory
