| 17 |

Real-time speech emotion contololler using faceEmotional voice conversion via facial expression recognition

|

|---|

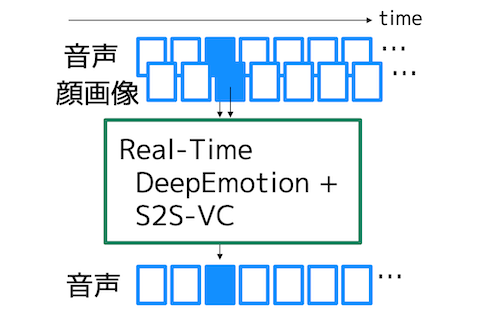

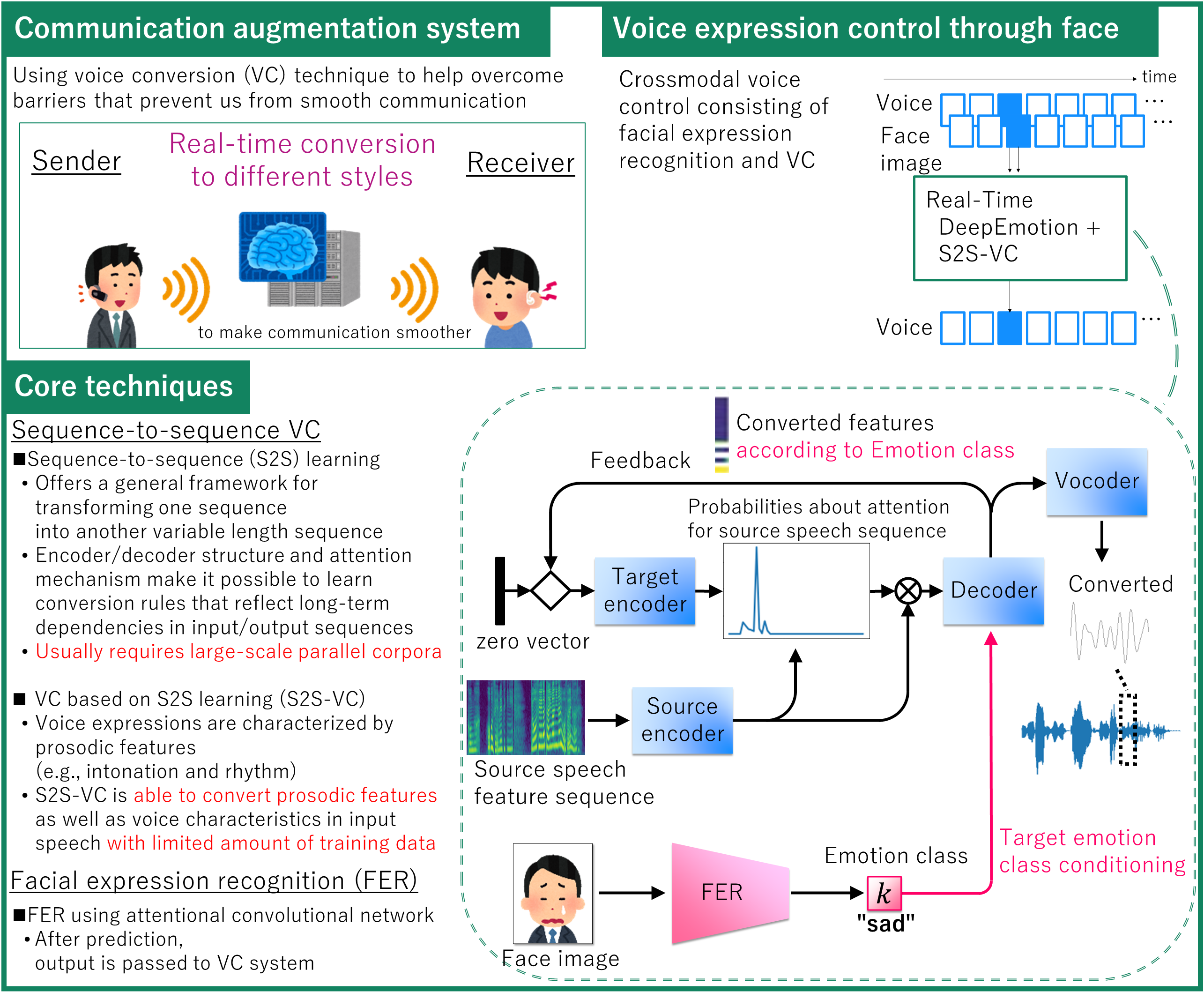

There are many kinds of physical or mental barriers that prevent individuals from smooth verbal communication. One key technique to overcome some of these barriers is voice conversion (VC), a technique to convert para/non-linguistic information contained in a given utterance without changing the linguistic information. Here, we propose a crossmodal voice control system, which offers a way to control the vocal expression of emotion in speech through the facial expression in a face image. The proposed system consists of performing facial expression recognition (FER) followed by VC. For VC, we have developed a method based on sequence-to-sequence (S2S) learning, which is designed to convert the prosodic features as well as the voice characteristics in speech conditioned on the output of the FER system. We believe that this work can provide some insight on what it is like to be able to control our voice through different modalities.

[1] H. Kameoka, K. Tanaka, T. Kaneko, N. Hojo, “ConvS2S-VC: Fully convolutional sequence-to-sequence voice conversion,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, pp. 1849-1863, June 2020.

[2] K. Tanaka, H. Kameoka, T. Kaneko, N. Hojo, “AttS2S-VC: Sequence-to-sequence voice conversion with attention and context preservation mechanisms,” in Proc. 2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP2019), pp. 6805-6809, May 2019.

[3] M. Shervin, M. Minaei, and A. Abdolrashidi, “Deep-emotion: Facial expression recognition using attentional convolutional network.” Sensors 21.9:3046, 2021.

Kou Tanaka / Recognition Research Group, Media Information Laboratory

Email: cs-openhouse-ml@hco.ntt.co.jp